Arch Server Progress

-

One of the things I like about tech/IT/OSs is that there is always something new to learn, and most likely someone else already knows what you need to learn. So I am going to try to make this not confusing and lay it out in steps. I found that Arch Linux does need to be rebooted every once in a while. There is debate on the interwebs about whether it should be rebooted as soon as a new kernel version is installed, whether it can wait until you have time or the server can be down with less impact, or whether restarting it once a year or so is enough. My Arch web server hasn't been rebooted for over 250 days. So while I was making sure I would be able to use my current VirtualBox installs in Arch Linux Desktop (KDE Plasma), I found out how to check which kernel version is installed versus which version is currently running.

Type in the console:

- Kernel version running: `uname -r`, or `uname -a` for more details

- Kernel version installed: `pacman -Qi linux`

If those two versions are different, then you aren't running the latest kernel that is installed, and you would need to reboot to run it. It is argued that security is important, which, yes, I believe it is. However, with how secure Linux is to begin with, maybe restarting immediately isn't necessary unless there is something very major happening.
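The comparison above can be automated. This is a minimal sketch, assuming the stock Arch `linux` package (not `linux-lts` or `linux-zen`, whose version strings look different). One wrinkle, based on the version strings Arch's `linux` package uses: `uname -r` prints something like `6.9.7-arch1-1`, while pacman reports the same kernel as `6.9.7.arch1-1`, and for a `.0` release pacman may omit the `.0` that `uname` shows. The script normalizes the pacman string before comparing. The function names `normalize_pkgver` and `reboot_needed` are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: compare the running kernel with the installed "linux" package.
# Assumes the stock Arch "linux" package is installed (not linux-lts/linux-zen).

# Turn a pacman version such as "6.9.7.arch1-1" into the uname -r style
# "6.9.7-arch1-1"; a two-part version like "6.10.arch1-2" becomes "6.10.0-arch1-2".
normalize_pkgver() {
    local ver="${1/.arch/-arch}"
    local base="${ver%%-*}" rest="${ver#*-}"
    # uname always shows three numeric components; pacman may show only two.
    if [[ "$base" =~ ^[0-9]+\.[0-9]+$ ]]; then
        base="$base.0"
    fi
    printf '%s-%s\n' "$base" "$rest"
}

# $1 = running kernel (uname -r), $2 = installed package version (pacman).
reboot_needed() {
    local running="$1" installed
    installed="$(normalize_pkgver "$2")"
    if [[ "$running" == "$installed" ]]; then
        echo "Running the installed kernel ($running); no reboot needed."
    else
        echo "Running $running but $installed is installed; reboot to use it."
    fi
}

# Only query the live system when pacman is available (i.e. on Arch).
if command -v pacman >/dev/null 2>&1; then
    # "pacman -Q linux" prints "linux <version>"; keep the second field.
    reboot_needed "$(uname -r)" "$(pacman -Q linux | awk '{print $2}')"
fi
```

Run it after an update; if it reports a mismatch, a reboot will load the newer kernel.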

I would be interested in what everyone’s thoughts are (especially @phenomlab) in regard to this. Restart ASAP, when convenient or once a year or so?

What led me to find this out was getting QEMU and Virtual Machine Manager to work in Linux so I could convert and import the VirtualBox image. It was successful.

Those steps:

- Use Export Appliance on the virtual machine you want to move over

- Copy the new image to the Linux machine. I used scp in a Windows terminal to copy the file to the Linux machine

- On the Linux system, extract the file using the command console

- Use the console to convert the file to one QEMU can read

- Use Virtual Machine Manager to import the converted image and run the virtual machine
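The console steps above could look roughly like this. It is a sketch, assuming Export Appliance produced an `.ova` file (a tar archive holding an `.ovf` descriptor and one or more `.vmdk` disks), that `qemu-img` from QEMU is installed, and that qcow2 is the target format. File and host names such as `MyVM.ova` and `arch-host`, and the function name `convert_ova`, are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: unpack a VirtualBox .ova export and convert its disk(s) to qcow2.
# The copy step ran on Windows beforehand, e.g. (hypothetical names):
#   scp .\MyVM.ova user@arch-host:~/
set -euo pipefail

convert_ova() {
    local ova="$1" outdir="${2:-.}" vmdk
    # An .ova is a plain tar archive; extract the .ovf and .vmdk files.
    tar -xf "$ova" -C "$outdir"
    for vmdk in "$outdir"/*.vmdk; do
        [[ -e "$vmdk" ]] || continue   # no .vmdk found: the glob stayed literal
        # -f is the source format, -O the output format (qcow2 is QEMU's native one).
        qemu-img convert -f vmdk -O qcow2 "$vmdk" "${vmdk%.vmdk}.qcow2"
        echo "Converted $vmdk -> ${vmdk%.vmdk}.qcow2"
    done
}

# Usage: ./convert_ova.sh MyVM.ova [output-dir]
if [[ $# -gt 0 ]]; then
    convert_ova "$@"
fi
```

The resulting `.qcow2` file can then be selected in Virtual Machine Manager with its option to import an existing disk image.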

After following these steps, it worked. I had a virtual machine running in a virtual machine running on Windows 10. It was fun to figure that out.

-

@Madchatthew The general rule of thumb with Linux is that every kernel update attracts a reboot. The reason for this is that these updates cannot take effect while the kernel is in use, so a reboot is the only way to ensure you have the best protection.

You cannot upgrade the Linux kernel without rebooting. At least not in a way you would think.

There are technologies like SystemTap, DTrace, kpatch or kGraft which allow for small modifications of kernel code. These are meant as short-term “workarounds” to prevent security threats, and typically to give you more time until you can plan a maintenance window for a reboot.

You will have to reboot at some point and should design your infrastructure for that (in the commercial sense).

-

@phenomlab this makes a lot of sense. I don’t mind rebooting the server then. I am just glad that I won’t have to reinstall the operating system at some point like I do with Debian and the others. I don’t think you have to reinstall the operating system with Fedora either. It would be interesting to run a web server off of Fedora.

I will have to start implementing reboots into my upgrading schedule to make sure the latest security patches are in place. I am still happy with Arch though. I am having a great experience so far with it and I hope that it stays that way.

-

I updated and rebooted the server, waited about 30 seconds (probably closer to 20), and was able to access the server. The sites were back up and running with no problems. Now I am running the current Linux kernel.

-

So my brother-in-law messaged me that he wasn't able to send or receive email. I thought, well, that is weird, because it should all be up and running. So I remoted into the server, started doing some investigation, and found that somehow Dovecot had become disabled. So I used

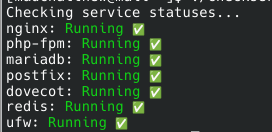

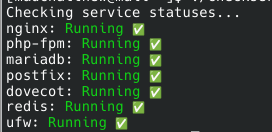

`sudo systemctl enable dovecot`, then `sudo systemctl start dovecot`, and finally `sudo systemctl status dovecot` to verify that the service is enabled and started.

So the lesson I learned from this is that once you update and reboot to be on the latest kernel, you should go through and make sure all of your services are running. Normally I would just go to the website, make sure that both websites are displaying and functioning as intended, and call it a day. I should have been checking all of the services from the beginning. Now I will be putting this into practice.

This brings me to the next project for this server: I plan on making a bash script that will go through, check each service, and return a “Service Running” message, where “Service” is the name of the service, whether it is NGINX, PHP-FPM, Postfix, Dovecot, or any others. That will make the process quick and easy, and less painful than typing the command a bunch of times.
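Since the problem was that Dovecot had become disabled, checking only whether a service is running would not catch that it will not come back after the next reboot. A minimal sketch of a helper that looks at both states: `systemctl is-enabled` (will it start at boot?) and `systemctl is-active` (is it running now?), both with `--quiet` so they only set an exit code, plus `systemctl enable --now`, which enables and starts a unit in one step. The function name `ensure_service` and the file name in the usage comment are hypothetical.

```shell
#!/usr/bin/env bash
# Sketch: make sure each named service is enabled at boot AND running now.
# ensure_service is a hypothetical helper name, not an existing tool.

ensure_service() {
    local svc="$1"
    # --quiet suppresses output; only the exit status is used.
    if ! systemctl is-enabled --quiet "$svc"; then
        echo "$svc is not enabled; enabling and starting it"
        # --now starts the unit in the same step as enabling it.
        sudo systemctl enable --now "$svc"
    elif ! systemctl is-active --quiet "$svc"; then
        echo "$svc is enabled but not running; starting it"
        sudo systemctl start "$svc"
    else
        echo "$svc is enabled and running"
    fi
}

# Usage: ./ensure_services.sh dovecot postfix nginx
for svc in "$@"; do
    ensure_service "$svc"
done
```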

-

@Madchatthew Interesting how Dovecot was suddenly disabled. Anything of interest in

`/var/log/dovecot.log` or `/var/log/mail.log`?

-

@phenomlab this is another lesson I need to learn: check the logs. I am usually the person who checks the status of the service, tries to restart it and goes from there, then starts googling. I think looking at the log files first would save me a lot of time googling, or at least tell me what to google for so I can fix an issue faster. I am doing the process backwards. So I am going to remote in and look at those logs to see what they say about the Dovecot service being disabled. There is a small chance that I forgot to enable the service, but I am pretty sure I enabled it.

I will look at those logs and update here what I find out.
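A minimal sketch of that log check. It assumes a default Arch setup, where services started by systemd log to the journal and plain files like `/var/log/mail.log` only exist if a syslog daemon or Dovecot's own logging settings create them. So it reads those files when present and otherwise falls back to `journalctl -u <service>`. `show_service_logs` and the `LOG_DIR` override are hypothetical names.

```shell
#!/usr/bin/env bash
# Sketch: show recent log lines for a service, preferring plain log files
# and falling back to the systemd journal. LOG_DIR is a hypothetical override.

show_service_logs() {
    local svc="$1" lines="${2:-50}" logdir="${LOG_DIR:-/var/log}" f found=0
    # <service>.log, plus mail.log where syslog setups often put Postfix/Dovecot messages.
    for f in "$logdir/$svc.log" "$logdir/mail.log"; do
        if [[ -r "$f" ]]; then
            echo "== $f =="
            tail -n "$lines" "$f"
            found=1
        fi
    done
    if (( found == 0 )); then
        # No log files: ask journald for the unit's most recent entries.
        echo "== journalctl -u $svc =="
        journalctl -u "$svc" -n "$lines" --no-pager
    fi
}

# Usage: ./show_service_logs.sh dovecot 100
if [[ $# -gt 0 ]]; then
    show_service_logs "$@"
fi
```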

-

@Madchatthew You’d be surprised at the number of times I’ve set up a service and forgotten to enable it!

-

@phenomlab yes, and I am leaning more towards me forgetting to enable the service, especially if there is nothing in the logs that specifically says it was disabled for some reason.

-

So, I know that AI is being used for all sorts of nefarious things out in this world, but I also believe there are more people using AI for good than for bad things. One such case in point: I just used ChatGPT to write a bash script so I can check to make sure services are running. And let me tell you, it did a beautiful job!

I wrote out the following prompt:

Write a bash script for Arch linux to check if the following services are running: nginx php-fpm mariadb postfix dovecot For each of the checks, display in the terminal if they are running or stopped

I am sure there are other ways to do this, but it looks like it took its cue from my “For each of” and wrote a for-each loop. In the prompt in ChatGPT, none of the wording is bolded or colored in blue, which is perfectly fine. I added Redis and UFW to the code in my script file to make sure those are running as well.

Then ChatGPT told me to make the script executable using `chmod +x check_services.sh`, and to double-check that it chose the correct service names by using `systemctl list-units --type=service` to get the exact names of the services and change them accordingly. So I did that, and everything checks out. Then ChatGPT said that if I would like this to be color-coded, I should let it know. So I used the following prompt:

This works perfectly. Please include color-coded output

This was the final code that ChatGPT output:

```bash
#!/bin/bash

# Colors
GREEN="\033[0;32m"
RED="\033[0;31m"
NC="\033[0m" # No Color

# List of services to check
services=(
  nginx
  php-fpm
  mariadb
  postfix
  dovecot
  redis
  ufw
)

echo "Checking service statuses..."

# Loop through each service
for service in "${services[@]}"; do
  status=$(systemctl is-active "$service")
  if [[ "$status" == "active" ]]; then
    echo -e "${service}: ${GREEN}Running ✅${NC}"
  else
    echo -e "${service}: ${RED}Stopped ❌${NC}"
  fi
done
```

So upon running this in the terminal when remoted into the server:

`./check_services.sh`

I get the following output:

I am happy with the results and now have a super easy way to double check and make sure all of the services are running after I reboot the server when a new kernel is installed.
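If you ever want to run this check unattended (from cron, a systemd timer, or chained with `&&` after an update), it helps for the script to exit with a non-zero status when something is wrong, and to report whether each service is enabled at boot as well as running, since a disabled service is what broke email earlier. A sketch along those lines, reusing the same service list. `check_services` is a hypothetical function name, and note that `systemctl is-enabled` can print states other than `enabled` (for example `static`), which this sketch counts as needing attention.

```shell
#!/usr/bin/env bash
# Sketch: report active/enabled state per service and return non-zero if any
# service is not both "active" and "enabled". Service list copied from above.

services=(nginx php-fpm mariadb postfix dovecot redis ufw)

check_services() {
    local svc active enabled problems=0
    for svc in "$@"; do
        # Each command prints a one-word state, e.g. "active"/"inactive",
        # "enabled"/"disabled"; stderr is hidden for unknown units.
        active=$(systemctl is-active "$svc" 2>/dev/null)
        enabled=$(systemctl is-enabled "$svc" 2>/dev/null)
        printf '%-10s %-10s %s\n' "$svc" "${active:-unknown}" "${enabled:-unknown}"
        if [[ "$active" != active || "$enabled" != enabled ]]; then
            problems=$((problems + 1))
        fi
    done
    echo "$problems service(s) need attention"
    # Status 0 only when every service is active and enabled.
    (( problems == 0 ))
}

# Run the check only when executed directly, not when sourced.
if [[ "${BASH_SOURCE[0]}" == "$0" ]]; then
    check_services "${services[@]}"
fi
```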

-

@Madchatthew Yes, ChatGPT excels in this sense, and does take cues from key items you describe, which sets it apart from others. I actually have a monthly paid subscription to ChatGPT because I use it more frequently these days for something else I have in the pipeline I’m working on.

-

@phenomlab That is awesome! I think I am going to start using ChatGPT to help with some programming. If nothing else, it should be able to help me understand how to program certain aspects of an app, whether web or otherwise.

-

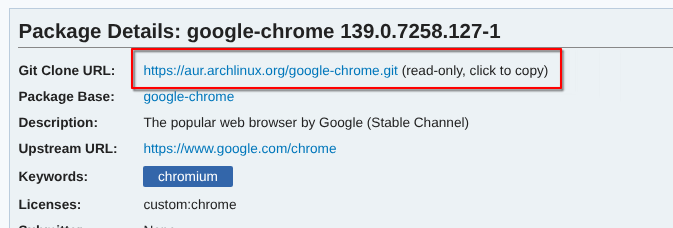

One of the package install options is the AUR (Arch User Repository). These are user-contributed packages that aren't in the official repositories, so they can't be installed directly with pacman. Usually the suggestion is to install an AUR helper such as `yay` or `paru`. I am one who doesn't like extra programs on my computer if I don't need them; it is another app that can have issues on an upgrade. Of course, there is no doubt that typing `yay -S google-chrome` is way easier and quicker. So how do you install from aur.archlinux.org without either of those apps? Easy, let's go through it step by step.

-

Go to the website

aur.archlinux.org -

We will use google-chrome for an example. I use chrome off the AUR so that I can log in and have all my bookmarks and extensions sync.

-

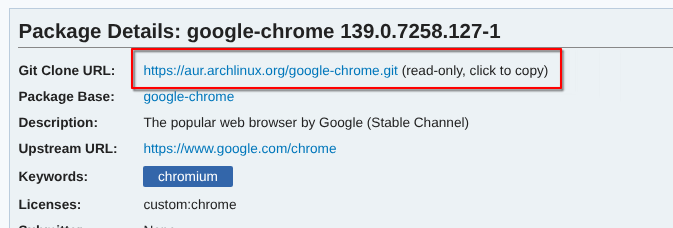

On the page for google-chrome on the AUR there will be a link next to

Git Clone URL:. -

Click on the link, which is read only to copy the link.

-

Go to your terminal and type

cd Download- this changes to the Download directory. -

Type

git clone 'right click and paste here'the link that you clicked on to copy. -

Press Enter and now the contents will be downloaded.

-

Change directory to the new one that appears, which in this case would be

google-chrome -

Once in this directory, now type

makepkg -s -iand it will install after updating packages and compiling. The -s will install missing dependencies and -i will tell makepkg to install after a successful build.

So say you have been using Google Chrome and it tells you to restart to install the latest update, and you do that, but no update installs. That is because a package built from the AUR isn't updated by Chrome itself or by a regular `pacman -Syu`. With an AUR helper you would type `yay -Syu` to upgrade. I prefer to do the following:

- In the terminal, go to the Downloads folder and then into the folder of the program you want to update. In this case: `~/Downloads/google-chrome`

- Type `git pull`.

- After git is done pulling down the updates, type `makepkg -s -i`.

- Go through the install process after a successful build.

You will need to put in your password a couple of times and that is normal. Hope this helps and thank you!
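The clone-or-pull-then-build routine above can be wrapped in a small script. This is a sketch, assuming `git` and the `base-devel` group are installed, that clones live in `~/Downloads` as in the steps above, and that the clone URL follows the `https://aur.archlinux.org/<package>.git` pattern shown on AUR package pages. `aur_sync` and the `AUR_DIR` override are hypothetical names. Run it as your normal user: makepkg refuses to run as root and calls sudo itself when it needs to install, which is likely why you get asked for your password more than once.

```shell
#!/usr/bin/env bash
# Sketch: install or update one AUR package without an AUR helper.
# Assumes git + base-devel are installed; AUR_DIR is a hypothetical override.
set -euo pipefail

# The ( ) body runs in a subshell, so the cd calls don't leak to the caller.
aur_sync() (
    pkg="$1"
    cd "${AUR_DIR:-$HOME/Downloads}"
    if [[ -d "$pkg/.git" ]]; then
        # Already cloned earlier: pull the updated PKGBUILD.
        git -C "$pkg" pull
    else
        # First time: clone using the package's Git Clone URL.
        git clone "https://aur.archlinux.org/${pkg}.git"
    fi
    cd "$pkg"
    # -s installs missing dependencies, -i installs the package after a successful build.
    makepkg -si
)

# Usage: ./aur_sync.sh google-chrome
if [[ $# -gt 0 ]]; then
    aur_sync "$1"
fi
```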

-

@Madchatthew Another great guide - thanks.

-

@phenomlab Thank you! And you are welcome! I struggled with this the first couple of times Google Chrome had an update. Then I finally understood that I have to do this each time and that it isn’t done automatically.

-

@Madchatthew This is one of the things that I always loathe about unmaintained repositories. They are so easily abandoned these days and it’s often not until the months have gone by where you realise your browser is woefully out of date because the repository no longer has anyone lovingly updating it.

-

@phenomlab Yeah, I am with you there. If they stop maintaining the Google Chrome one, I will have to import my bookmarks and stuff into a different browser and switch. I am hoping they don’t stop maintaining it, though.

Now some software once it is installed will have an update feature so you don’t have to do that every time, but unfortunately, the google chrome one doesn’t.

-

@Madchatthew said in Arch Server Progress:

Now some software once it is installed will have an update feature so you don’t have to do that every time, but unfortunately, the google chrome one doesn’t.

Yes, that was always the case with the Ubuntu repository for Chrome. PITA.

-

So I have to come on here and celebrate now! I had been ignoring the Dovecot upgrade in pacman and decided that it was time to upgrade Dovecot. The only problem with that is that they revamped the whole thing, and there are major breaking changes. I had version 2.3 and am now running version 2.4.

I have spent over 18 hours total getting all the config files set up properly and running. During this, though, I found that I had a couple of things set up the way I shouldn't have, and I was able to modify them to be better. Now that I have the new Dovecot version set up, I can look into getting some extra features to work with it, like better spam protection, quotas, and some other things.

I am very happy and pleased with the result. I can email my Gmail account and my wife’s Gmail account and get the emails pretty much instantaneously on both sides. Now I can set up my website to send mail when a purchase is made and that sort of thing.

I am stoked!

By the way, check out my webpage so far and let me know what you think. It is a WordPress website, and I think I am going with SureCart for the store. I know the popular one to go with is WooCommerce, but most of the plugins that I need cost a lot of money. With SureCart I can do pretty much everything the way it is. I have to finagle a couple of things, but that is better than paying hundreds of dollars to use WooCommerce the way I want. At least for now.

If anyone has any other recommendations that are free and open source ecommerce solutions let me know. I will check them out. So the website is https://graceunboundbindery.com
